StrataConf Insider Tweets

StrataConf 2014 ran from Feb 11-13, with a focus on tools, techniques and learnings for Big Data insights and visualizations. We thought it might be interesting (and oh so meta!) to gather the public activity during the conference and work out which sessions generated the most interest on Twitter.

Collection

First off, we are not talking Big Data. Neither are we doing all-out surveillance. Our starting point was a list of 200+ speakers and panelists.

The idea and the plan came together at the last minute. By the time Twitter monitoring was in place, the tweets for the morning of Feb 12 were past the timeline limits. Over roughly 47 hours, we collected around 2500 tweets from this group of Strata insiders.

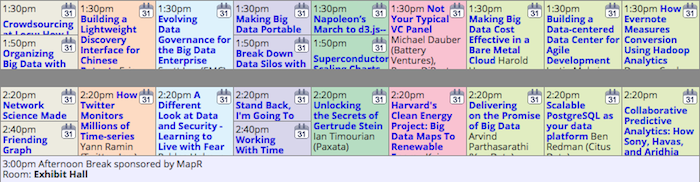

The Strata schedule has around nine parallel tracks at most times. We grabbed the .ics file to gather start and end times, and used the full listings to get the ratings information.
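Pulling start and end times out of an .ics file can be sketched roughly as follows. This is a minimal standard-library illustration, not the code we actually ran: the function name `parse_ics_events` and the sample event (title and times) are made up for the example, and real calendar files have more edge cases (time zones, escaped characters) than this handles.

```python
from datetime import datetime

def parse_ics_events(text):
    """Return a list of (summary, start, end) tuples from iCalendar text."""
    # Unfold continuation lines: a line starting with a space or tab
    # continues the previous line.
    lines = []
    for raw in text.splitlines():
        if raw[:1] in (" ", "\t") and lines:
            lines[-1] += raw[1:]
        else:
            lines.append(raw)

    events, current = [], None
    for line in lines:
        if line == "BEGIN:VEVENT":
            current = {}
        elif line == "END:VEVENT" and current is not None:
            events.append((current.get("SUMMARY"),
                           current.get("DTSTART"),
                           current.get("DTEND")))
            current = None
        elif current is not None and ":" in line:
            name, value = line.split(":", 1)
            name = name.split(";", 1)[0]  # drop parameters such as TZID
            if name in ("DTSTART", "DTEND"):
                # Time zone handling is ignored in this sketch.
                value = datetime.strptime(value.rstrip("Z"), "%Y%m%dT%H%M%S")
            current[name] = value
    return events

# Invented sample event, for illustration only.
sample = """BEGIN:VCALENDAR
BEGIN:VEVENT
SUMMARY:Example Session
DTSTART;TZID=America/Los_Angeles:20140212T134000
DTEND;TZID=America/Los_Angeles:20140212T142000
END:VEVENT
END:VCALENDAR"""

events = parse_ics_events(sample)
```

Each tuple gives a session title with its time slot, which is all the later matching step needs from the schedule.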

Tweets By Time

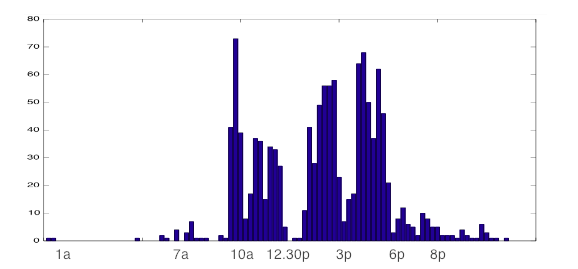

Here is a histogram of tweets over a two-day period with a bin size of 15 minutes. Caveat: data before noon on Feb 12 is underrepresented.

Clearly, a lot of tweets happen during and just after keynotes. Tweets really pick up steam around 2 p.m. and 5 p.m. Surprisingly, the insiders tweet less during breaks and very little during lunch. One can imagine that hunger, thirst, bodily needs and in-person social conversations trump tweeting.
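The 15-minute binning behind a histogram like this one can be sketched in a few lines. The function name `bin_tweets` and the sample timestamps are invented for illustration, and the sketch assumes the bin size divides 60 evenly.

```python
from collections import Counter
from datetime import datetime

def bin_tweets(timestamps, bin_minutes=15):
    """Count timestamps per fixed-width bin, keyed by the bin's start time.

    Assumes bin_minutes divides 60 evenly (5, 10, 15, 30, ...).
    """
    counts = Counter()
    for ts in timestamps:
        floored_minute = ts.minute - ts.minute % bin_minutes
        bin_start = ts.replace(minute=floored_minute, second=0, microsecond=0)
        counts[bin_start] += 1
    return counts

# Invented timestamps, for illustration only.
timestamps = [
    datetime(2014, 2, 12, 14, 1),
    datetime(2014, 2, 12, 14, 14, 59),
    datetime(2014, 2, 12, 14, 15),
    datetime(2014, 2, 12, 17, 44),
]
counts = bin_tweets(timestamps)
```

Sorting the keys of `counts` gives the x-axis of the histogram, and the values give the bar heights.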

Finding the Most Tweeted Sessions

Obviously, tweets cannot be matched to a session based on time alone. In addition to the multiplicity of simultaneous tracks, a lot of tweets are not about a particular session. Some thoughtful people tweet well after the session.

What we need is a way to classify a given tweet to match a session.

Features and Classification

Features for a session include the title, the speaker and the organization. The session description is weighted very low (being quite monotonous in terms such as big data, tools, real-time, cutting-edge, cloud, technologies and such mumbo). Tweets include user mentions and hashtags in addition to text and are filtered for #strataconf. A modified vector-space tf-idf model was used for classification. Sessions within the time-slot corresponding to the tweet time are given a small boost during the matching process.
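To make the idea concrete, here is a rough sketch of tf-idf matching with a time-slot boost. It is a plain textbook tf-idf with cosine similarity, not the modified model described above, whose details are not spelled out here. The function names, the `time_boost` and `threshold` values, and the session times are all assumptions made up for the example. The organization field is left empty and the low-weighted description is left out.

```python
import math
import re
from collections import Counter
from datetime import datetime

def tokenize(text):
    """Lowercase and split into word tokens; '@' and '#' are dropped."""
    return re.findall(r"[a-z0-9_]+", text.lower())

def tfidf_vectors(docs):
    """Build tf-idf weight dicts for tokenized docs; return (vectors, idf)."""
    n = len(docs)
    df = Counter(term for doc in docs for term in set(doc))
    idf = {t: math.log((1 + n) / (1 + c)) + 1 for t, c in df.items()}
    vectors = [{t: tf * idf[t] for t, tf in Counter(doc).items()} for doc in docs]
    return vectors, idf

def cosine(a, b):
    """Cosine similarity between two sparse vectors stored as dicts."""
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    na = math.sqrt(sum(w * w for w in a.values()))
    nb = math.sqrt(sum(w * w for w in b.values()))
    return dot / (na * nb) if na and nb else 0.0

def classify(tweet, tweet_time, sessions, time_boost=1.1, threshold=0.05):
    """Return the best-matching session title, or None if nothing scores
    above the threshold. time_boost and threshold are illustrative guesses."""
    docs = [tokenize(s["title"] + " " + s["speaker"] + " " + s["org"])
            for s in sessions]
    vectors, idf = tfidf_vectors(docs)
    # Tweet terms that never occur in any session are ignored.
    tweet_vec = {t: tf * idf[t]
                 for t, tf in Counter(tokenize(tweet)).items() if t in idf}
    best, best_score = None, threshold
    for s, vec in zip(sessions, vectors):
        score = cosine(tweet_vec, vec)
        if s["start"] <= tweet_time <= s["end"]:
            score *= time_boost  # small boost for sessions in the tweet's slot
        if score > best_score:
            best, best_score = s["title"], score
    return best

# Titles and speakers come from the list in this post; the start/end times
# are placeholders, not the real schedule.
slot = {"start": datetime(2014, 2, 13, 9, 0), "end": datetime(2014, 2, 13, 9, 30)}
sessions = [
    dict(title="The Future Isn't What it Used to Be", speaker="James Burke", org="", **slot),
    dict(title="Survivorship Bias and the Psychology of Luck", speaker="David McRaney", org="", **slot),
    dict(title="Bedtime Stories - Learning from Sleep Data", speaker="Monica Rogati", org="", **slot),
]

match = classify(
    "There goes the epistemological neighborhood. -- james burke #strataconf",
    datetime(2014, 2, 13, 9, 10),
    sessions,
)
no_match = classify("Great coffee this morning #strataconf",
                    datetime(2014, 2, 13, 9, 10), sessions)
```

Here `match` resolves to the James Burke session because the speaker's name appears in the tweet, while `no_match` is `None` because the tweet shares no terms with any session. A tweet that only mentions a handle such as @davidmcraney would need that handle added to the session features to be matched.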

Methodology and Error

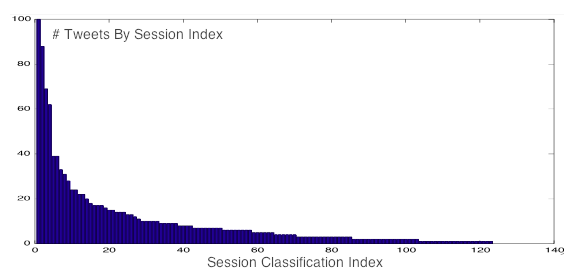

The classification code was hacked up within a day and a half. A random 10% of the tweets were used in fine-tuning the model weights with statistical checks. The resulting classes were sorted by membership count and the top and bottom 10 ranked sessions were manually scanned for mismatched tweets and error counts.

Precision as measured in the top 10 sessions is over 95%, while recall is lower, at an estimated 85%. Even so, the distribution and the ranking of the sessions should hold to a high degree of confidence.
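For reference, precision and recall for a single session can be computed from a hand-checked sample along these lines. The function name and the toy labels are invented for illustration and are not our actual evaluation data.

```python
def precision_recall(predicted, actual, session):
    """Compute (precision, recall) for one session.

    predicted and actual map a tweet id to a session title (or None).
    """
    tp = sum(1 for t, s in predicted.items()
             if s == session and actual.get(t) == session)
    fp = sum(1 for t, s in predicted.items()
             if s == session and actual.get(t) != session)
    fn = sum(1 for t, s in actual.items()
             if s == session and predicted.get(t) != session)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

# Toy labels: "A" and "B" stand in for session titles.
predicted = {1: "A", 2: "A", 3: "A", 4: "B", 5: None}
actual = {1: "A", 2: "A", 3: "B", 4: "A", 5: "A"}
p, r = precision_recall(predicted, actual, "A")
```

In this toy sample, two of the three tweets assigned to "A" are correct, and two of the four tweets that truly belong to "A" were found.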

Classification Results

Of the 151 distinct sessions, at least 120 were associated with one or more tweets. Two sessions (Club Strata and Great Debate) proved to be troublesome catch-alls for tweets and have been removed from the list with due mention.

Sessions Most Tweeted By Strata Insiders

The list.

- The Future Isn’t What it Used to Be, James Burke (100+)

- Probabilistic Programming - What, Why, How, and When, Beau Cronin (69)

- Bedtime Stories - Learning from Sleep Data, Monica Rogati (62)

- Chicago Bars, Prisoner’s Dilemma, and Practical Models in Search, Chris Harland

- Survivorship Bias and the Psychology of Luck, David McRaney

- Graph All The Things! 11 Graph Data Use Cases That Aren’t Social, Emil Eifrem

- Agile Analytics, Neal Ford

- Data Transformation - A User-Centric Approach to Accessing and Analyzing Big Data, Joe Hellerstein

- Driving the Future of Smart Cities - How to Beat the Traffic, Ian Huston

- Movie Reconstruction from Brain Signals - “Mind-Reading”, Bin Yu

- The Sidekick Pattern Using Small Data to Increase the Value of Big Data, Abe Gong

- Thursday Keynote Welcome, Alistair Croll

- Keynote with Ben Fry, Ben Fry

- Working With Time Series Data Using Apache Cassandra, Patrick McFadin

- Information Visualization for Large-Scale Data Workflows, Michael Conover

- Stand Back, I’m Going To Try Science!, Rachel Poulsen

- The Last Mile - Challenges and Opportunities in Data Tools, Wes McKinney

- Organizing Big Data with the Crowd, Lukas Biewald

- How Twitter Monitors Millions of Time-series, Yann Ramin

- The Urgent Need to Appify Big Data, Ryan Cunningham

- Expressing Yourself in R, Hadley Wickham

- MLbase Distributed Machine Learning Made Easy, Ameet Talwalkar

A Few Tweets

Embedded here to provide a flavor of the classification problem.

There goes the epistemological neighborhood. -- james burke #strataconf
— Joe Hellerstein (@joe_hellerstein) February 13, 2014

Lispers at #datascience #strataconf : could I do the @beaucronin Church lisp example in #clojure instead? Libs ported there? If not, I might
— Russell Whitaker (@OrthoNormalRuss) February 13, 2014

Lucky people are open to new experiences, easily abandon routines, fail often, shrug off failure, try again. @davidmcraney #strataconf
— Rachel Kalmar (@grapealope) February 14, 2014

Excited to learn about how my data (and lack of sleep) is being used in Data Science. #strataconf @mrogati pic.twitter.com/pTEiEtdBXu
— Noelle Sio (@noellesio) February 13, 2014

And the winner for #strataconf best talk title is... Chicago Bars, Prisoner’s Dilemma, and Practical Models in Search http://t.co/XULQx96Kt7
— Daniel Tunkelang (@dtunkelang) February 13, 2014

More

More can be done better, automatically. Let us know if you find this useful, or would like to see this kind of analysis in some other context.
